There is bias (value 1) on each hidden and output layer as depicted in the above image.

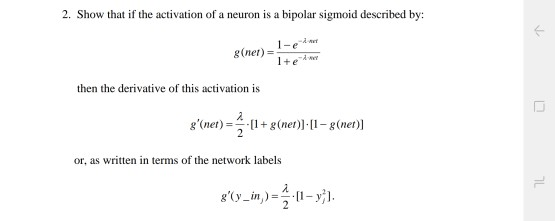

This architecture is adequate for a large number of applications.įigure 1: Single hidden layer neural-network framework One hidden layer with multiple neurons, and one multiple-output layer as illustrated on the following image. This implementation supported the backpropagation algorithm for a single hidden layer neural-network, in which it has one multiple-input layer, the classical book "Fundamentals of Neural Networks" by Laurene Fausett. So it is recommended to read the neural-network fundamentals in advance, e.g. This article will only discuss the implementation aspects, The Function Approximation on the Deep Q-Learning. This post is written to describe how the Backpropagation (BP) algorithm works since it will be used to implement As part of my article about How to implement Deep Reinforcement Learning
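
To make the architecture concrete, here is a minimal sketch of a single hidden layer network with one backpropagation step, written in plain Python. It is not the implementation this post refers to: the class name `SingleHiddenLayerNet`, the sigmoid activation, the squared-error loss, the learning rate and the weight initialisation range are all assumptions chosen for illustration. The bias is modelled as an extra input fixed at 1 on both the hidden and the output layer.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class SingleHiddenLayerNet:
    """Hypothetical sketch: inputs -> one hidden layer -> outputs."""

    def __init__(self, n_in, n_hidden, n_out, lr=0.5, seed=0):
        rng = random.Random(seed)
        # One row of weights per neuron; the extra last weight multiplies
        # the constant bias input of value 1.
        self.w_hidden = [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)]
                         for _ in range(n_hidden)]
        self.w_out = [[rng.uniform(-0.5, 0.5) for _ in range(n_hidden + 1)]
                      for _ in range(n_out)]
        self.lr = lr  # assumed learning rate

    def forward(self, x):
        xb = list(x) + [1.0]  # inputs plus bias
        hidden = [sigmoid(sum(w * v for w, v in zip(row, xb)))
                  for row in self.w_hidden]
        hb = hidden + [1.0]  # hidden activations plus bias
        out = [sigmoid(sum(w * v for w, v in zip(row, hb)))
               for row in self.w_out]
        return xb, hb, out

    def train_step(self, x, target):
        """Run one forward and backward pass; return the pre-update error."""
        xb, hb, out = self.forward(x)
        # Output deltas: (t - y) * f'(net), where f' = y * (1 - y) for sigmoid.
        d_out = [(t - y) * y * (1.0 - y) for t, y in zip(target, out)]
        # Hidden deltas: propagate the output deltas back through w_out.
        # The bias column of w_out is skipped because the bias has no inputs.
        d_hidden = []
        for j in range(len(self.w_hidden)):
            err = sum(d_out[k] * self.w_out[k][j] for k in range(len(d_out)))
            d_hidden.append(err * hb[j] * (1.0 - hb[j]))
        # Weight updates (gradient descent on the squared error).
        for k, row in enumerate(self.w_out):
            for j in range(len(row)):
                row[j] += self.lr * d_out[k] * hb[j]
        for j, row in enumerate(self.w_hidden):
            for i in range(len(row)):
                row[i] += self.lr * d_hidden[j] * xb[i]
        return 0.5 * sum((t - y) ** 2 for t, y in zip(target, out))


if __name__ == "__main__":
    net = SingleHiddenLayerNet(n_in=2, n_hidden=3, n_out=1)
    for step in range(5):
        print(step, net.train_step([0.0, 1.0], [1.0]))
```

Treating the bias as one more input keeps the forward and backward passes uniform: the bias weight is updated by the same rule as every other weight, with its input always equal to 1.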
